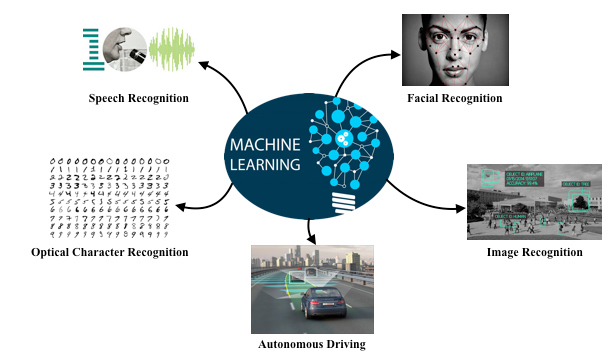

Machine Learning Applications

Machine learning is the subfield of artificial intelligence (AI) that gives computers the ability to learn without being explicitly programmed. Having evolved from the study of pattern recognition and computational learning theory, machine learning explores the study and construction of algorithms that can learn from data and make predictions on it. Rather than following strictly static program instructions, such algorithms build a model from sample inputs and use it to make data-driven predictions or decisions.

Research Members

Sajjad Taheri

Master of Science

Doctoral Candidate

Dominic Henze

Master of Science

Doctoral Candidate

Jan Ole Johanßen

Master of Science

Doctoral Candidate

Jan Philip Bernius

Master of Science

Doctoral Candidate

Paul Schmiedmayer

Master of Science

Doctoral Candidate

Projects

Theses Offered

Theses In Progress

Theses Finished

A manual-procedural activity (MPA) involves following the steps of a given workflow for manipulating the physical world. Examples include manual assembly, repair and maintenance, various crafts, and cooking. To learn an MPA, the trainee needs to master both the steps of the procedure and the hand skills required for manipulating physical objects and using the tools. TUMA: An Intelligent Tutoring System for Manual-Procedural Activities supports trainees in learning an MPA.

Multiple topics are available in the context of the TUMA project. For details on the topics, please see my chair web page.